The Curious Case of SSE Streaming Agent Responses on AWS Lambda

This article was originally published on the AWS Builder Center.

The Setup

We built a service that runs LLM inference on AWS and streams results back to clients in real-time using Server-Sent Events (SSE). Think of any chat-style LLM application — the user submits a prompt, and tokens appear progressively as the model generates them, rather than waiting for the entire response to complete.

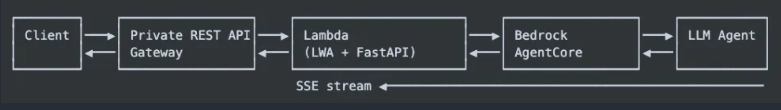

The architecture:

The Lambda function uses Lambda Web Adapter (LWA) — a lightweight adapter that lets you run standard web frameworks like FastAPI inside Lambda without modifying your code. Our FastAPI app listens on port 8080, invokes a Bedrock AgentCore agent, and streams the agent’s response back as SSE events. On the API Gateway side, we use the Lambda /response-streaming-invocations integration URI with streaming enabled — the documented approach for Lambda response streaming through REST API Gateway.

On paper, every piece is configured for streaming. The client should see events arriving every few hundred milliseconds as the model generates tokens. In practice, the testing team reported something different: nothing happens for 8-10 seconds, then every single event arrives in a burst.

Not great for a streaming API.

The Symptom

A typical SSE stream from our agent produces ~240 events over ~16 seconds: progress updates, content deltas (LLM tokens), citations, and lifecycle events. Each event is small — 200 to 500 bytes of JSON.

What the client saw:

    [00:00.000] ... silence ...
    [00:08.200] ... silence ...
    [00:13.800] event: stream_start          ← everything arrives here
    [00:13.801] event: progress
    [00:13.802] event: progress
    [00:13.803] event: content_block_start
    [00:13.804] event: content_block_delta
    [00:13.805] event: content_block_delta
    ...                                      ← 240 events in ~3 seconds
    [00:16.900] event: stream_end

The time_starttransfer (TTFB) from curl was consistently 13-14 seconds. The total response was ~80KB. All events had been generated server-side over 16 seconds, but the client received them in a single dump at the end.
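The same measurement can be scripted so that it also captures gaps between events, not only the first byte. The following is a minimal sketch using only the Python standard library; `read_arrivals` and `summarize_arrivals` are hypothetical helper names, not part of our service, and the sketch assumes the endpoint can be reached with a plain GET request.

```python
import time
import urllib.request
from typing import Iterable, List


def read_arrivals(url: str, timeout: float = 60.0) -> List[float]:
    """Return seconds-since-request for each non-empty line received from url."""
    start = time.monotonic()
    arrivals = []
    request = urllib.request.Request(url, headers={"Accept": "text/event-stream"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        for raw_line in response:  # the response object yields lines as they arrive
            if raw_line.strip():
                arrivals.append(time.monotonic() - start)
    return arrivals


def summarize_arrivals(arrivals: Iterable[float]) -> dict:
    """Reduce arrival times to time-to-first-byte, largest gap, and total time."""
    times = sorted(arrivals)
    if not times:
        return {"ttfb": None, "max_gap": None, "total": None}
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    return {"ttfb": times[0], "max_gap": max(gaps, default=0.0), "total": times[-1]}
```

A healthy stream shows a small `ttfb` and a small `max_gap`; a buffered one shows a `ttfb` close to `total`.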

The Investigation

Hypothesis 1: API Gateway is buffering

Private REST API Gateway seemed like the obvious suspect. REST APIs don’t natively support streaming the way HTTP APIs do. But our Terraform config was correct:

    integration_type = "AWS_PROXY"
    uri              = "arn:aws:apigateway:${region}:lambda:path/.../response-streaming-invocations"

The response-streaming-invocations URI is the documented way to enable Lambda response streaming through API Gateway. We verified the integration was wired correctly.

Hypothesis 2: Lambda Web Adapter is buffering

LWA sits between the Lambda runtime and our FastAPI app. Maybe it was holding chunks? We upgraded from LWA 0.8.4 to 0.9.1 (the latest at the time) and tested.

Result: TTFB went from 13.8s to 21.4s. Worse, not better. The upgrade changed nothing about the buffering behavior; the higher TTFB was likely cold-start variance rather than a regression.

But here’s the key insight: running the same FastAPI app locally (without LWA, without Lambda) streamed perfectly. Events arrived with ~35ms spacing. This ruled out our application code and pointed squarely at the Lambda runtime layer.

Hypothesis 3: Lambda Response Streaming has an internal buffer

This turned out to be the answer.

Lambda Response Streaming has an undocumented internal buffering mechanism. Small chunks (our 200-500 byte SSE events) get held in a buffer and aren’t flushed to the client until either:

- The buffer fills up (~100KB based on our testing)

- The stream ends

There is no flush() API. Unlike traditional web servers where you can force a flush after each write, Lambda’s streaming runtime gives you no control over when buffered data gets sent to the client.

This behavior exists even with Lambda Function URLs (no API Gateway involved at all). It’s a property of the Lambda streaming runtime itself.
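To make the described behavior concrete, here is a toy model of a threshold buffer. It is a simplification built from the two flush conditions listed above, not Lambda's actual implementation, and the function name and the per-event size of 340 bytes are assumptions chosen for illustration.

```python
from typing import Iterable, List, Tuple


def simulate_threshold_buffer(
    chunks: Iterable[Tuple[float, int]],
    threshold_bytes: int,
    stream_end: float,
) -> List[Tuple[float, int]]:
    """Toy model: hold chunks until threshold_bytes accumulate or the stream ends.

    chunks is a sequence of (emit_time_seconds, size_bytes).
    Returns a list of (flush_time_seconds, flushed_bytes).
    """
    flushes = []
    pending = 0
    for emit_time, size in chunks:
        pending += size
        if pending >= threshold_bytes:
            flushes.append((emit_time, pending))
            pending = 0
    if pending:
        # Whatever is left only goes out when the stream closes.
        flushes.append((stream_end, pending))
    return flushes


# 240 events of 340 bytes each, spread evenly over 16 seconds (about 80KB total).
events = [(i * 16 / 240, 340) for i in range(240)]
flushes = simulate_threshold_buffer(events, threshold_bytes=100 * 1024, stream_end=16.0)
print(flushes)  # [(16.0, 81600)]
```

Because the whole response is smaller than the threshold, the model never flushes before the stream ends. That is roughly what the client saw, although the real TTFB of 13-14 seconds shows the actual mechanism is not this simple.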

The Fix: Padding with SSE Comments

The SSE specification defines comment lines — any line starting with : (colon) is silently ignored by compliant clients. We exploit this to inflate each event past Lambda’s buffer threshold:

    # SSE comment: ": " + spaces + "\n\n"
    # Compliant clients silently ignore these lines
    padding_bytes = 100 * 1024  # 100KB
    padding = f": {' ' * padding_bytes}\n\n"

Each SSE event gets a 100KB comment block appended to it. Lambda sees a ~100KB chunk, flushes it immediately, and the client receives the event. The client’s SSE parser strips the comment and processes only the actual event data.
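The claim that padding is invisible to clients can be checked with a small parser. The sketch below is a deliberately simplified SSE parser written for this illustration (it handles only `\n` line endings and does not implement `id` or `retry` semantics); `parse_sse` is a hypothetical name, not a library function.

```python
from typing import Dict, List


def parse_sse(stream_text: str) -> List[Dict[str, str]]:
    """Parse SSE text into a list of events, skipping comment lines."""
    events = []
    current: Dict[str, str] = {}
    for line in stream_text.split("\n"):
        if line == "":
            # A blank line dispatches the event collected so far, if any.
            if current:
                events.append(current)
                current = {}
            continue
        if line.startswith(":"):
            continue  # comment line: ignored, however long it is
        field, _, value = line.partition(":")
        if value.startswith(" "):
            value = value[1:]  # a single leading space after the colon is dropped
        if field == "data" and "data" in current:
            current["data"] += "\n" + value
        else:
            current[field] = value
    return events


padding = f": {' ' * (100 * 1024)}\n\n"
event = 'event: progress\ndata: {"seq": 1}\n\n'

plain = parse_sse(event)
padded = parse_sse(event + padding)
print(plain == padded)  # True
```

The padded and unpadded streams parse to the same single event, which is why application code never sees the extra bytes.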

The Tiered Padding Strategy

Padding every event with 100KB would balloon a typical 80KB response to ~24MB. That’s wasteful. Through testing, we found that Lambda’s buffer behavior has two phases:

- Initial buffer (~100KB): The first chunks need to be large to “prime” the stream

- Subsequent chunks: Once the stream is flowing, smaller padding keeps it moving

We implemented a three-tier approach:

    class SSEGenerator:
        def __init__(self, flush_padding_kb=0, flush_max_events=3, flush_tail_padding_kb=0):
            self._flush_padding_kb = flush_padding_kb            # 100KB for first N events
            self._flush_max_events = flush_max_events            # N = 3
            self._flush_tail_padding_kb = flush_tail_padding_kb  # 10KB for remaining events

- First 3 events: 100KB padding each (breaks the initial buffer)

- Remaining events: 10KB padding each (keeps the stream flowing)

- Local development: 0KB padding (no Lambda, no buffering)

The is_lambda check ensures padding is only applied when running inside Lambda:

    adapter = ContentCollectingAdapter(
        request_id=session_id,
        flush_padding_kb=settings.stream_flush_padding_kb if settings.is_lambda else 0,
        flush_max_events=settings.stream_flush_max_events if settings.is_lambda else 0,
        flush_tail_padding_kb=settings.stream_flush_tail_padding_kb if settings.is_lambda else 0,
    )
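How `settings.is_lambda` is computed is not shown here. One plausible approach, sketched below as an assumption, is to check for the `AWS_LAMBDA_FUNCTION_NAME` environment variable, which the Lambda runtime sets for every function. The helper names `detect_lambda` and `padding_settings` are hypothetical.

```python
import os
from typing import Mapping, Optional


def detect_lambda(env: Optional[Mapping[str, str]] = None) -> bool:
    """Return True when the process appears to be running inside AWS Lambda."""
    env = os.environ if env is None else env
    return bool(env.get("AWS_LAMBDA_FUNCTION_NAME"))


def padding_settings(is_lambda: bool, head_kb: int = 100, max_events: int = 3, tail_kb: int = 10) -> dict:
    """Return the padding arguments to use, with all padding off outside Lambda."""
    if not is_lambda:
        return {"flush_padding_kb": 0, "flush_max_events": 0, "flush_tail_padding_kb": 0}
    return {
        "flush_padding_kb": head_kb,
        "flush_max_events": max_events,
        "flush_tail_padding_kb": tail_kb,
    }


print(padding_settings(detect_lambda({})))
# {'flush_padding_kb': 0, 'flush_max_events': 0, 'flush_tail_padding_kb': 0}
```

Passing the environment in as a mapping keeps the check easy to exercise in unit tests without touching the real process environment.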

The Results

We ran systematic A/B tests, changing one variable at a time:

| Configuration | LWA | Head Padding | Tail Padding | TTFB | Total | Download Size |
|---|---|---|---|---|---|---|
| Baseline | 0.8.4 | none | none | 13.8s | 16.9s | 81KB |
| LWA upgrade only | 0.9.1 | none | none | 21.4s | 24.1s | 78KB |
| All events padded | 0.9.1 | 100KB × all | n/a | 7.8s | 64.5s | 37MB |
| First 3 only | 0.9.1 | 100KB × 3 | none | 3.0s | 19.6s | 383KB |
| First 3 + tail 10KB | 0.9.1 | 100KB × 3 | 10KB × rest | 2.7s | 18.7s | 2.8MB |

The “first 3 only” approach had a problem: after the 3 padded events, Lambda resumed buffering the small events. Log analysis showed a 13-second gap between event #3 and event #4 — the exact same buffering behavior, just delayed.

Adding 10KB tail padding solved this. Events now flow continuously with ~1s spacing throughout the stream. TTFB dropped from 13.8s to 2.7s, and the total response time is comparable to baseline (the padding adds negligible processing overhead).

The download size increased from 81KB to 2.8MB — a 35x increase. For a streaming API where responsiveness matters more than bandwidth, this is an acceptable trade-off. The SSE comments are stripped by the client parser and never reach application code.
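The download sizes follow from simple arithmetic. As a rough check, assuming 240 events and an 80KB unpadded response (the typical figures quoted earlier) and ignoring the few framing bytes around each comment, the estimate looks like this; `estimated_download_kb` is a hypothetical helper written for this illustration.

```python
def estimated_download_kb(event_count: int, base_kb: int, head_kb: int, head_events: int, tail_kb: int) -> int:
    """Estimate response size in KB: payload plus head padding plus tail padding."""
    head = min(head_events, event_count)
    tail = event_count - head
    return base_kb + head * head_kb + tail * tail_kb


first3_only = estimated_download_kb(240, base_kb=80, head_kb=100, head_events=3, tail_kb=0)
first3_tail = estimated_download_kb(240, base_kb=80, head_kb=100, head_events=3, tail_kb=10)
all_padded = estimated_download_kb(240, base_kb=80, head_kb=100, head_events=240, tail_kb=0)

print(first3_only)  # 380
print(first3_tail)  # 2750
print(all_padded)   # 24080
```

The first two estimates line up with the measured 383KB and 2.8MB. The measured 37MB for the all-padded run is higher than the estimate, so that run presumably emitted more or larger events; the sketch does not explain the difference.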

The Implementation

The padding lives in SSEGenerator._format_event():

    # Method of SSEGenerator. Needs `import json` and
    # `from datetime import UTC, datetime` (Python 3.11+).
    def _format_event(self, event_type: str, data: dict) -> str:
        self._sequence += 1
        envelope = {
            "event": event_type,
            "seq": self._sequence,
            "timestamp": datetime.now(UTC).isoformat().replace("+00:00", "Z"),
            "data": data,
        }
        event_str = f"event: {event_type}\ndata: {json.dumps(envelope)}\n\n"

        # First N events get full padding to break Lambda's initial buffer
        if self._padding and self._flush_event_count < self._flush_max_events:
            self._flush_event_count += 1
            return event_str + self._padding

        # Remaining events get smaller tail padding to keep the stream flowing
        if self._tail_padding:
            return event_str + self._tail_padding

        return event_str
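The excerpts above do not show how `_padding` and `_tail_padding` are built from the kilobyte settings. The following self-contained sketch fills that gap under the assumption that both are precomputed SSE comment strings; `PaddedSSEGenerator` and `make_padding` are illustrative names, not our production code.

```python
import json
from datetime import datetime, timezone


def make_padding(kb: int) -> str:
    """Build an SSE comment of roughly kb kilobytes, or '' when kb is 0."""
    return f": {' ' * (kb * 1024)}\n\n" if kb > 0 else ""


class PaddedSSEGenerator:
    def __init__(self, flush_padding_kb=0, flush_max_events=3, flush_tail_padding_kb=0):
        self._sequence = 0
        self._flush_event_count = 0
        self._flush_max_events = flush_max_events
        # Padding strings are built once and reused for every event.
        self._padding = make_padding(flush_padding_kb)
        self._tail_padding = make_padding(flush_tail_padding_kb)

    def format_event(self, event_type: str, data: dict) -> str:
        self._sequence += 1
        envelope = {
            "event": event_type,
            "seq": self._sequence,
            "timestamp": datetime.now(timezone.utc).isoformat().replace("+00:00", "Z"),
            "data": data,
        }
        event_str = f"event: {event_type}\ndata: {json.dumps(envelope)}\n\n"
        # Head padding for the first N events, tail padding (possibly empty) after that.
        if self._padding and self._flush_event_count < self._flush_max_events:
            self._flush_event_count += 1
            return event_str + self._padding
        return event_str + self._tail_padding


gen = PaddedSSEGenerator(flush_padding_kb=100, flush_max_events=3, flush_tail_padding_kb=10)
sizes = [len(gen.format_event("progress", {"step": i})) for i in range(5)]
print([size // 1024 for size in sizes])  # three events above 100KB, then about 10KB each
```

Precomputing the two strings keeps per-event cost to a single concatenation, which fits the observation that padding adds negligible processing overhead.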

Configuration is environment-driven via pydantic-settings:

    # config.py
    stream_flush_padding_kb: int = 100      # Head padding size
    stream_flush_max_events: int = 3        # Events that get head padding
    stream_flush_tail_padding_kb: int = 10  # Tail padding for remaining events

Lessons Learned

Lambda Response Streaming is not the same as HTTP streaming. Traditional web servers flush when you tell them to. Lambda’s streaming runtime has its own buffering logic that you cannot control directly. If your chunks are small, they will be held until the buffer fills or the stream ends.

The SSE comment spec is your friend. The : prefix for comments in SSE isn’t just for documentation. It’s a legitimate mechanism for padding that compliant clients handle correctly. Comment lines are also commonly sent as keep-alive heartbeats, a use the HTML specification itself suggests for protecting connections against proxies that drop idle streams.

Test locally AND in Lambda. Our FastAPI app streamed perfectly on localhost. The buffering only manifested inside Lambda. If we’d only tested locally, we’d never have found this.

Measure TTFB, not just total time. Total response time was similar for the baseline and the two head-padded configurations (~17-20s). The difference was almost entirely in when the client started receiving data. For streaming APIs, TTFB is the metric that matters for perceived performance.

Systematic isolation testing works. By changing one variable at a time (LWA version, padding on/off, padding count, padding size), we conclusively identified the root cause. Guessing would have led us down the wrong path — our first instinct was API Gateway, which turned out to be innocent.

Is There a Better Way?

As of early 2026, AWS does not expose a flush API for Lambda Response Streaming. The padding workaround is the only reliable way to force immediate delivery of small chunks. A few alternatives we considered:

- Batching events: Accumulate multiple SSE events into larger chunks. This defeats the purpose of streaming — the client wants events as they happen.

- Lambda Function URLs: Removes API Gateway from the path, but the buffering is in the Lambda runtime itself. Same problem.

- HTTP API Gateway: Supports streaming natively, but doesn’t support Private endpoints (VPC-only access), which was a hard requirement for us.

- WebSockets via API Gateway: Different protocol, different client implementation, and adds connection management complexity.

The padding approach is ugly but effective. It’s transparent to clients, configurable per environment, and adds minimal latency. Sometimes the pragmatic fix wins.

References:

https://betterdev.blog/aws-lambda-response-streaming-pitfalls/

https://docs.aws.amazon.com/lambda/latest/dg/response-streaming-tutorial.html